In this post, I dive deep into copying on-premises data to an S3 bucket by exploring the following three distinct scenarios, each of which will produce a unique result:

- Using DataSync to perform the initial copy and all incremental changes.

Depending upon which storage class was used for the initial transfer, this could result in unexpected costs.

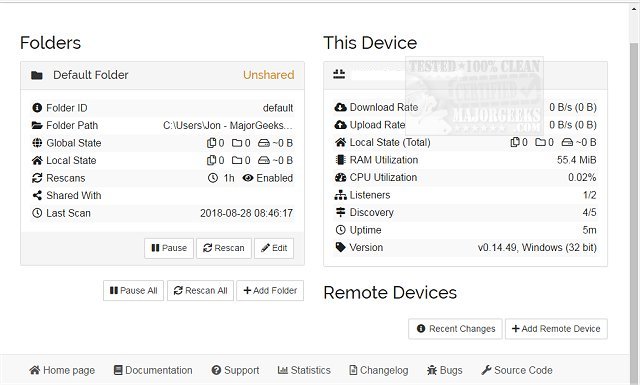

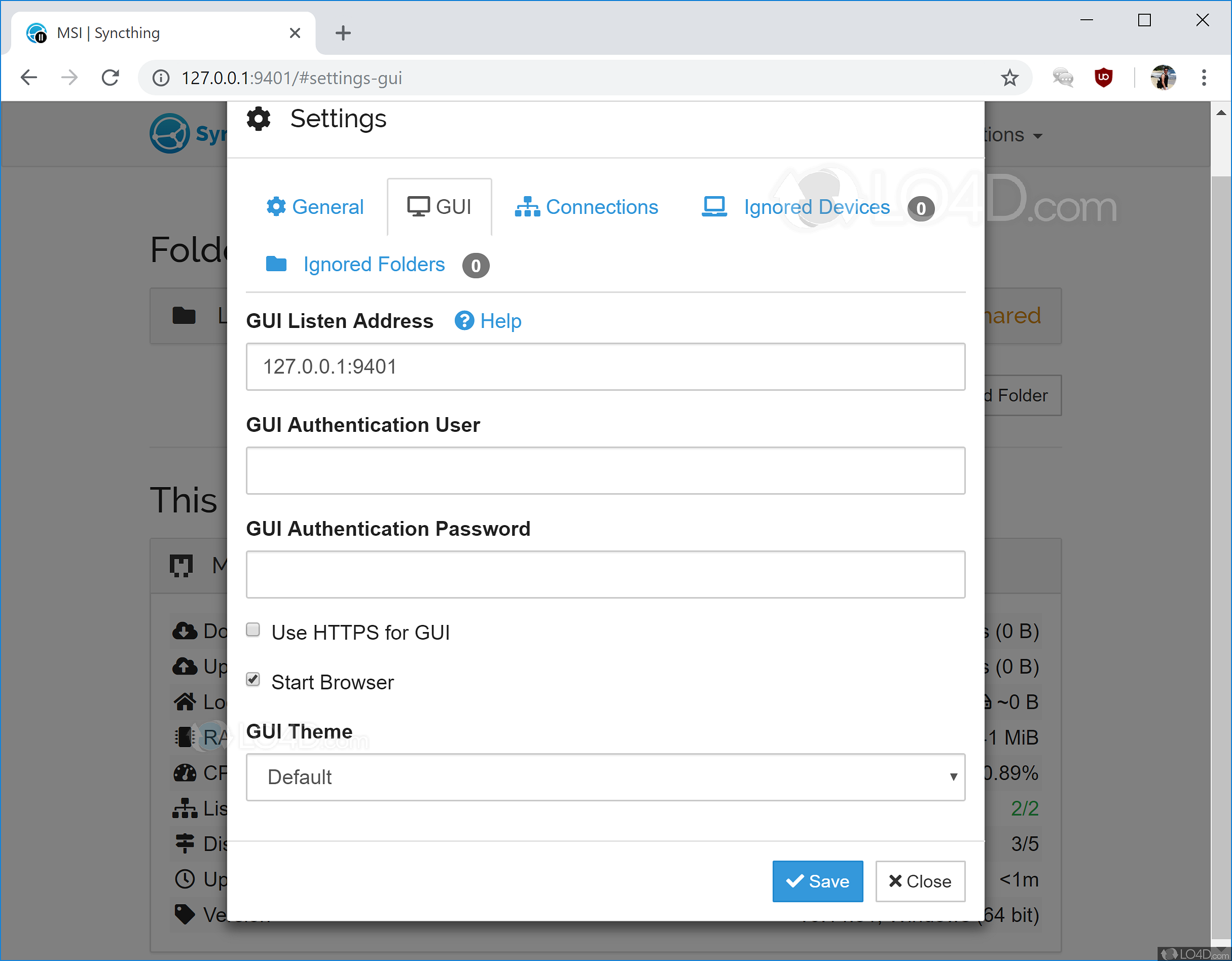

I often see cases in which customers start with a free data transfer utility, or an AWS Snow Family device, to get their data into S3. Sometimes, those same customers then use AWS DataSync to capture ongoing incremental changes. In this type of scenario, where data is first copied to S3 using one tool and incremental updates are applied using DataSync, there are a few questions to consider. How will DataSync respond when copying data to an S3 bucket that contains files that were written by a different data transfer utility? Will DataSync recognize that the existing files match the on-premises files? Will a second copy of the data be created in S3, or will the data need to be retransmitted?

To avoid additional time, costs, and bandwidth consumption, it is important to fully understand exactly how DataSync identifies “changed” data. DataSync uses object metadata to identify incremental changes. If the data was transferred using a utility other than DataSync, this metadata will not be present. In that case, DataSync will need to perform additional operations to properly transfer incremental changes to S3.
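To make the metadata point concrete, here is a minimal sketch of how you might check which objects in a bucket already carry the kind of metadata DataSync relies on. The key names in `ASSUMED_DATASYNC_KEYS` and the helper names are my assumptions for illustration, not a confirmed list, so verify them against the DataSync documentation before depending on them. The functions work on plain dictionaries, so they run without AWS credentials.

```python
# Hypothetical helper: classify S3 objects by whether their user metadata
# looks like it was written by DataSync.
# ASSUMPTION: these key names (shown without the "x-amz-meta-" prefix) are
# illustrative; confirm the real set in the DataSync documentation.
ASSUMED_DATASYNC_KEYS = {"file-mtime", "file-owner", "file-group", "file-permissions"}


def has_datasync_metadata(user_metadata):
    """Return True if any assumed DataSync key is present in the metadata dict."""
    keys = {key.lower() for key in user_metadata}
    return bool(keys & ASSUMED_DATASYNC_KEYS)


def split_by_metadata(objects):
    """Split {object_key: metadata_dict} into (with_metadata, without_metadata) lists."""
    with_metadata, without_metadata = [], []
    for object_key, metadata in sorted(objects.items()):
        if has_datasync_metadata(metadata):
            with_metadata.append(object_key)
        else:
            without_metadata.append(object_key)
    return with_metadata, without_metadata


if __name__ == "__main__":
    sample = {
        "data/a.txt": {"file-mtime": "1700000000000000000ns", "file-owner": "1000"},
        "data/b.txt": {},  # e.g. uploaded by a different utility
    }
    tagged, untagged = split_by_metadata(sample)
    print("with metadata:", tagged)
    print("without metadata:", untagged)
```

In practice, the metadata dictionary for each object could come from the `Metadata` field of a boto3 `head_object` response. Objects that land in the second list are the ones for which DataSync would likely need to do the additional work described above.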

There are many factors to consider when migrating data from on premises to the cloud, including speed, efficiency, network bandwidth and cost. A common challenge many organizations face is choosing the right utility to copy large amounts of data from on premises to an Amazon S3 bucket.


November 2023
